Lesson planning is a big part of any teacher’s day-to-day work.

One thing we’ve been exploring is ways we could reduce this workload, while helping to improve curriculum quality.

We designed the ‘Manage key stage 2 and 3 curriculum resources’ service to do just that. It’s a site that gives teachers a high-quality plan for their curriculum, and all the resources they need to deliver it. Instead of creating lessons from scratch, teachers can focus their time where they add value - tailoring for their class and adapting to individual pupil needs.

Last month the team went through the new Government Service Standard's alpha assessment. Ours was the first service to pass.

Here we talk about our experience of preparing for and going through the assessment, as the first service team in government to do so.

Choosing to use the new service standard

From a Government Digital Service (GDS) point of view, our service is not typical. Under the old service standard - designed for big transactional services like the original 25 transformation exemplars - it would have been a stretch to even call ours a service. We’re not digitising an existing service - this is brand new. It’s not transactional, it’s behind a sign-in and it’s not public. The service is entirely discretionary - teachers don’t have to use it.

More relevant to our service

When arranging our assessment, we were given a choice of which standard to be assessed against. For our product, which you could classify as content-as-a-service, we felt the new service standard was a much better fit. It’s a service a teacher may use hundreds of times a year. And unlike a self-contained transaction, such as applying for a driving licence, it’s never really 'done'.

Understanding the changes in the assessment criteria

Our Delivery Manager and I went through the updated standard and guidance meticulously. For good measure, I swotted up on everything Lou Downe, former Director of Design and Service Standards for the UK Government, has said about what makes a good service.

There are 2 significant changes in the criteria that are specifically relevant to our service and helped us decide to be assessed against the new service standard.

Accessibility

There’s now more emphasis on accessibility. We were especially keen to make sure the service worked for neurodivergent teachers, who are often excluded from traditional accessibility testing.

Common components

We can now use common components in government other than those specified in the GOV.UK Design System. This suits us as a service team because we wanted to use experimental components from across the wider government design community to better meet our users’ needs.

We had daily check-ins to prepare

We knew it would be a challenge - the team had been through service assessments before, but not to the new standard, and not with a service as different as this. So we started planning.

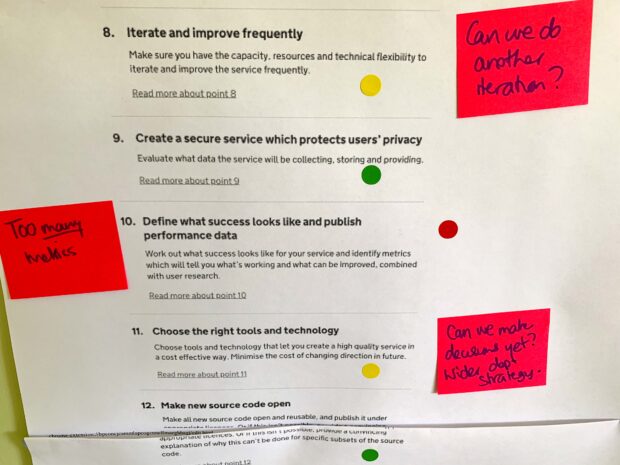

We mapped the work we’d done - and planned to do - against printouts of the new service standard.

In the run-up to the assessment we met daily to see how we were doing against the standard. We categorised each point of the standard based on whether we thought we’d met it. Each point was given a traffic light colour - red for work still to do, amber for nearly finished and green for done.

After a mock assessment we were ready for the real thing.

How the assessment went

How was this different? Not massively. As you’d expect, the questions were challenging. They interrogated our knowledge of service users and their needs, and went straight to the heart of our service.

But the approach felt more flexible and reflected the reality of designing complex end-to-end services.

If you’ve run your alpha properly and are well prepared, you shouldn’t have any problems working to the new standard. On the other hand, if your team has jumped to a solution too early, or hasn’t tested the riskiest parts of your end-to-end service, you may feel more exposed.

What’s next?

We passed, so it's full steam ahead. The department is preparing to move the service into private beta ready for testing during the autumn term. We’ll keep you posted on how it goes.

Find out more by getting in touch with Audree - you can follow her on Twitter.


1 comment

Comment by Andy Parker posted on

As the lead researcher on this project, I'd like to share my thoughts on the experience.

Our team worked in a user-centred way. Because this is the most critical element not just of service design as a practice but also of the GDS standards, we set ourselves up for success from day 1.

This is so important and easily forgotten. Within each sprint, prior to the amended standards, we asked ourselves how we could reflect them within our approach to understanding and delivering in the space.

Having everyone involved and thinking in this way made the challenging nature of the task at hand one which we were aligned to and felt good about each week.

It cannot be overstated how positive and encouraging Audrey and our policy leads were throughout.